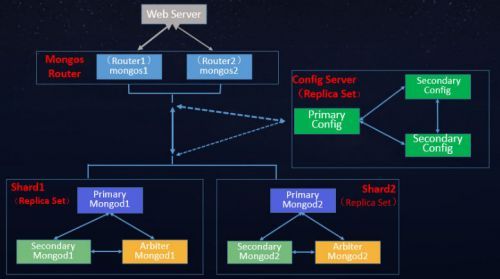

As the figure shows, there are four components: mongos, config server, shard, and replica set.

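mongos is the entry point for requests to the database cluster. All requests are coordinated through mongos, so the application does not need its own routing logic: mongos itself is the request dispatcher and forwards each data request to the appropriate shard. Production deployments usually run several mongos instances as entry points, so that if one of them fails, MongoDB requests can still be served.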
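To make the dispatching idea concrete, here is a minimal sketch in plain JavaScript (the language of the mongo shell snippets in this article). It models a cached routing table as a list of shard-key ranges. The shards `rs1` and `rs2`, the range boundaries and the `targetShards` helper are hypothetical, and real mongos targeting also handles range operators, compound keys and metadata versions. The sketch only shows the core decision: an equality match on the shard key goes to one shard, while a query without the shard key is broadcast to every shard (scatter-gather).

```javascript
// Hypothetical routing table: each chunk covers [min, max) of the shard key "id".
// rs1 and rs2 are illustrative; the cluster in this article has only rs0.
const routingTable = [
  { min: -Infinity, max: 100, shard: "rs0" },
  { min: 100, max: 500, shard: "rs1" },
  { min: 500, max: Infinity, shard: "rs2" },
];

// Returns the shards a query must be sent to. Only equality matches on a
// numeric shard key are modeled here.
function targetShards(table, query, shardKey) {
  if (Object.prototype.hasOwnProperty.call(query, shardKey)) {
    // The filter pins the shard key: exactly one chunk, hence one shard, owns it.
    const value = query[shardKey];
    const chunk = table.find((c) => value >= c.min && value < c.max);
    return [chunk.shard];
  }
  // No shard key in the filter: every shard that owns a chunk must be asked.
  return [...new Set(table.map((c) => c.shard))];
}

console.log(targetShards(routingTable, { id: 42 }, "id")); // [ 'rs0' ]
console.log(targetShards(routingTable, { name: "Leo" }, "id")); // [ 'rs0', 'rs1', 'rs2' ]
```

This is why queries that include the shard key scale better than queries that do not: the former touch one shard, the latter touch all of them.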

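config server: as the name suggests, this is the configuration server. It stores all of the cluster's metadata (routing and sharding information). mongos does not persist the shard list or the routing information itself; it only caches them in memory, while the config servers hold the authoritative copy. When mongos starts for the first time or is restarted, it loads this configuration from the config servers; when the metadata changes later, mongos refreshes its cached copy so that it keeps routing requests correctly. Production deployments run several config servers (since MongoDB 3.4 they must form a replica set), because they hold the sharding metadata and it must not be lost.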
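The split between "authoritative copy on the config servers" and "in-memory cache in mongos" can be sketched as a versioned cache. This is a simplified model under stated assumptions, not MongoDB's actual protocol: the `ConfigServer` and `Router` classes are hypothetical, and for simplicity the router compares versions on every lookup, whereas the real mechanism is more involved.

```javascript
// Simplified model (an assumption, not MongoDB's protocol): the config server
// holds the authoritative metadata and a version number; a router keeps an
// in-memory copy and reloads it only when the version has moved on.
class ConfigServer {
  constructor(metadata) {
    this.version = 1;
    this.metadata = metadata;
  }
  update(metadata) {
    this.metadata = metadata;
    this.version += 1; // every metadata change bumps the version
  }
}

class Router {
  constructor(configServer) {
    this.config = configServer;
    this.cachedVersion = 0; // nothing cached yet, so the first lookup loads
    this.cache = null;
    this.loads = 0; // how many times metadata was fetched from the config server
  }
  routingInfo() {
    if (this.cachedVersion < this.config.version) {
      // Empty or stale cache: copy the metadata from the config server.
      this.cache = JSON.parse(JSON.stringify(this.config.metadata));
      this.cachedVersion = this.config.version;
      this.loads += 1;
    }
    return this.cache;
  }
}

const config = new ConfigServer({ "test.t": "rs0" });
const mongosA = new Router(config);
mongosA.routingInfo(); // first call: loads from the config server
mongosA.routingInfo(); // second call: served from memory
config.update({ "test.t": "rs1" }); // metadata changes (rs1 is hypothetical)
console.log(mongosA.routingInfo()["test.t"], mongosA.loads); // rs1 2
```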

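shard: sharding is the process of splitting a database and distributing it across multiple machines. By spreading the data over several machines, you can store more data and handle a larger load without needing very powerful servers. The basic idea is to cut a collection into small chunks and distribute these chunks across several shards, so that each shard is responsible for only part of the total data; a balancer then evens out the shards by migrating chunks between them.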
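The chunk and balancer idea can be sketched as a toy model. It is not MongoDB's algorithm: here chunks are cut by document count (MongoDB 4.0 splits chunks by data size, 64 MB by default), the balancer `threshold` of one chunk is an assumption for illustration, and the shards `rs1`/`rs2` are hypothetical.

```javascript
// Toy model of chunking plus balancing (assumptions: chunks are cut by document
// count and a fixed threshold of 1 chunk is used; MongoDB's real rules differ).

// Cut a sorted array of shard-key values into chunks of at most maxDocsPerChunk.
function splitIntoChunks(sortedKeys, maxDocsPerChunk) {
  if (maxDocsPerChunk < 1) throw new RangeError("maxDocsPerChunk must be >= 1");
  const chunks = [];
  for (let i = 0; i < sortedKeys.length; i += maxDocsPerChunk) {
    const slice = sortedKeys.slice(i, i + maxDocsPerChunk);
    chunks.push({ min: slice[0], max: slice[slice.length - 1] });
  }
  return chunks;
}

// Move one chunk at a time from the fullest shard to the emptiest shard until
// the difference in chunk counts is at most `threshold`.
function balance(chunksPerShard, threshold = 1) {
  if (threshold < 1) throw new RangeError("threshold must be >= 1"); // 0 could loop forever
  const counts = { ...chunksPerShard };
  const moves = [];
  const shards = Object.keys(counts);
  if (shards.length === 0) return { counts, moves };
  for (;;) {
    let most = shards[0];
    let least = shards[0];
    for (const shard of shards) {
      if (counts[shard] > counts[most]) most = shard;
      if (counts[shard] < counts[least]) least = shard;
    }
    if (counts[most] - counts[least] <= threshold) break;
    counts[most] -= 1; // "migrate" one chunk...
    counts[least] += 1; // ...to the least loaded shard
    moves.push(`${most} -> ${least}`);
  }
  return { counts, moves };
}

// 1000 documents with id 1..1000, cut into chunks of 100 documents; all chunks
// start on rs0, then the balancer spreads them over rs0 and two hypothetical shards.
const ids = Array.from({ length: 1000 }, (_, i) => i + 1);
const chunks = splitIntoChunks(ids, 100);
const result = balance({ rs0: chunks.length, rs1: 0, rs2: 0 });
console.log(chunks.length, result.counts);
```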

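replica set: in this setup each shard is deployed as a replica set, whose members keep copies of the shard's data so that nothing is lost if one server goes down. Replication stores redundant copies of the data on several servers, which improves availability and keeps the data safe.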
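The way members end up with identical copies can be sketched with an operation log (oplog): the primary applies each write and appends it to the log, and secondaries replay the log in order. This is an illustration under simplifying assumptions; only `insert` and `delete` entries are modeled, and the `Member`, `primaryWrite` and `replicate` names are hypothetical, not MongoDB internals.

```javascript
// Minimal replication sketch (an assumption for illustration; real oplog
// entries, idempotency rules and sync sources are more involved).
class Member {
  constructor(name) {
    this.name = name;
    this.docs = new Map();
    this.nextEntry = 0; // position of the next oplog entry to apply
  }
  apply(entry) {
    if (entry.op === "insert") this.docs.set(entry.doc._id, entry.doc);
    if (entry.op === "delete") this.docs.delete(entry.id);
  }
}

// The primary applies the write locally and records it in the oplog.
function primaryWrite(primary, oplog, entry) {
  primary.apply(entry);
  oplog.push(entry);
  primary.nextEntry = oplog.length;
}

// A secondary replays every oplog entry it has not applied yet, in order.
function replicate(secondary, oplog) {
  while (secondary.nextEntry < oplog.length) {
    secondary.apply(oplog[secondary.nextEntry]);
    secondary.nextEntry += 1;
  }
}

const oplog = [];
const primary = new Member("172.16.1.242:27017");
const secondary = new Member("172.16.1.243:27017");
primaryWrite(primary, oplog, { op: "insert", doc: { _id: 1, name: "Leo" } });
primaryWrite(primary, oplog, { op: "insert", doc: { _id: 2, name: "Leo" } });
replicate(secondary, oplog);
console.log(secondary.docs.size); // 2: the secondary holds a full copy
```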

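Arbiter: an arbiter is a MongoDB instance in a replica set that does not hold any data. It needs minimal resources and no dedicated hardware. Do not deploy an arbiter on a host that also runs a data-bearing member of the same replica set; put it on an application server, a monitoring server, or a separate virtual machine. An arbiter is only needed when the replica set has an even number of data-bearing members: its extra vote makes the number of voting members (including the primary) odd, so that an election can reach a majority. For example, in a two-member set without an arbiter, if the primary fails, the surviving member holds only 1 of 2 votes and cannot become primary, so there is no automatic failover. The three-member shard replica set in this article already has an odd number of voters and does not use an arbiter.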
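The arbiter advice follows from the majority rule for elections. Assuming every member carries one vote (the default), this short calculation shows how many voting members can be unavailable while a primary can still be elected:

```javascript
// Votes needed to elect a primary: a strict majority of the voting members.
function majority(votingMembers) {
  return Math.floor(votingMembers / 2) + 1;
}

// Voting members that can be unavailable while an election can still succeed.
function faultTolerance(votingMembers) {
  return votingMembers - majority(votingMembers);
}

const layouts = [
  ["2 data-bearing members", 2],
  ["2 data-bearing members + 1 arbiter", 3],
  ["3 data-bearing members (like rs0 here)", 3],
  ["4 data-bearing members", 4],
];
for (const [label, voters] of layouts) {
  console.log(`${label}: needs ${majority(voters)} votes, tolerates ${faultTolerance(voters)} failure(s)`);
}
```

Two members tolerate no failure, an arbiter raises that to one, and a fourth data-bearing member tolerates no more failures than three, which is why an odd number of voting members is recommended.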

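To sum up: the application sends its create, read, update and delete requests to mongos; the config servers store the cluster metadata, which mongos caches and keeps in sync; the data itself ends up on the shards, and each shard's replica set keeps redundant copies to prevent data loss. The arbiter does not decide where data is stored; it only votes in primary elections.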

Environment:

[root@node241 ~]# uname -a

Linux node241 3.10.0-957.el7.x86_64 #1 SMP Thu Nov 8 23:39:32 UTC 2018 x86_64 x86_64 x86_64 GNU/Linux

[root@node241 ~]# cat /etc/redhat-release

CentOS Linux release 7.6.1810 (Core)

[root@node240 ~]# rpm -qa | grep mongo

mongodb-org-mongos-4.0.18-1.el7.x86_64

mongodb-org-server-4.0.18-1.el7.x86_64

mongodb-org-shell-4.0.18-1.el7.x86_64

mongodb-org-tools-4.0.18-1.el7.x86_64

mongodb-org-4.0.18-1.el7.x86_64

Node roles:

| Role | Hostname | IP | Port |
|---|---|---|---|
| mongos | node240 | 172.16.1.240 | 27017 |
| config | node241 | 172.16.1.241 | 27017 |
| config | node241 | 172.16.1.241 | 27018 |
| config | node241 | 172.16.1.241 | 27019 |
| sharding | node242 | 172.16.1.242 | 27017 |
| sharding | node243 | 172.16.1.243 | 27017 |
| sharding | node244 | 172.16.1.244 | 27017 |

yum install lrzsz lsof vim nmap -y

cat >> /etc/rc.local << EOF

echo never > /sys/kernel/mm/transparent_hugepage/enabled

echo never > /sys/kernel/mm/transparent_hugepage/defrag

EOF

1. Configure the MongoDB yum repository

vi /etc/yum.repos.d/mongodb-org-4.0.repo

[mongodb-org-4.0]

name=MongoDB Repository

baseurl=https://repo.mongodb.org/yum/redhat/7Server/mongodb-org/4.0/x86_64/

gpgcheck=1

enabled=1

gpgkey=https://www.mongodb.org/static/pgp/server-4.0.asc

You can set gpgcheck=0 here to skip GPG signature verification.

# yum makecache

Disable the firewall and SELinux:

systemctl stop firewalld

systemctl disable firewalld

systemctl mask firewalld

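sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config  # edit the real file: /etc/sysconfig/selinux is a symlink, and sed -i would replace the link instead of changing the target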

setenforce 0

2. Install MongoDB

# yum install -y mongodb-org

systemctl enable mongod

Configure the shard nodes (three nodes) as a replica set:

The configuration file is identical on every node:

[root@node242 mongo]# echo "rs.slaveOk()" >> /root/.mongorc.js  # let the mongo shell read from secondaries

[root@node242 mongo]# cat /etc/mongod.conf

# mongod.conf

# for documentation of all options, see:
#   http://docs.mongodb.org/manual/reference/configuration-options/

# where to write logging data.
systemLog:
  destination: file
  logAppend: true
  path: /var/log/mongodb/mongod.log

# Where and how to store data.
storage:
  dbPath: /var/lib/mongo
  journal:
    enabled: true
  engine: wiredTiger
  directoryPerDB: true
#  engine:
#  mmapv1:
#  wiredTiger:

# how the process runs
processManagement:
  fork: true  # fork and run in background
  pidFilePath: /var/run/mongodb/mongod.pid  # location of pidfile
  timeZoneInfo: /usr/share/zoneinfo

# network interfaces
net:
  port: 27017
  bindIp: 0.0.0.0  # Enter 0.0.0.0,:: to bind to all IPv4 and IPv6 addresses or, alternatively, use the net.bindIpAll setting.

#security:

operationProfiling:
  slowOpThresholdMs: 10
  mode: "slowOp"

replication:
  oplogSizeMB: 50
  replSetName: "rs0"
  secondaryIndexPrefetch: "all"  # only applies to the MMAPv1 storage engine

sharding:
  clusterRole: shardsvr

## Enterprise-Only Options

#auditLog:

#snmp:
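The `oplogSizeMB: 50` setting above caps the replication oplog at 50 MB, which is small. A back-of-the-envelope estimate of the resulting oplog window (how far a secondary can fall behind and still catch up from the oplog) is the oplog size divided by the rate at which oplog entries are written. The 0.5 MB/s rate below is a hypothetical figure, not a measurement of this cluster; on a running member, `rs.printReplicationInfo()` reports the actual window.

```javascript
// Rough oplog window: seconds of write history the capped oplog can hold.
// oplogWriteMBPerSec is a hypothetical rate; measure your own workload.
function oplogWindowSeconds(oplogSizeMB, oplogWriteMBPerSec) {
  if (oplogWriteMBPerSec <= 0) throw new RangeError("write rate must be positive");
  return oplogSizeMB / oplogWriteMBPerSec;
}

const windowSec = oplogWindowSeconds(50, 0.5); // oplogSizeMB: 50 from the shard config
console.log(`about ${windowSec} s of history`); // about 100 s of history
```

If a secondary is offline or lagging for longer than this window, the entries it needs have already been overwritten and it has to be resynchronized from scratch, so production oplogs are usually sized much larger.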

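Before starting the service, delete all files in the database directory (this destroys any existing data, so only do it on a freshly installed node):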

[root@node244 ~]# cd /var/lib/mongo

[root@node244 mongo]# ls

collection-0--9142324971473654113.wt index-3--9142324971473654113.wt mongod.lock WiredTiger.lock

collection-2--9142324971473654113.wt index-5--9142324971473654113.wt sizeStorer.wt WiredTiger.turtle

collection-4--9142324971473654113.wt index-6--9142324971473654113.wt storage.bson WiredTiger.wt

diagnostic.data journal WiredTiger

index-1--9142324971473654113.wt _mdb_catalog.wt WiredTigerLAS.wt

[root@node244 mongo]# rm -rf ./*

[root@node244 mongo]# systemctl start mongod  # start the service

[root@node244 mongo]# lsof -i:27017

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

mongod 5880 mongod 11u IPv4 34384 0t0 TCP *:27017 (LISTEN)

Initiate the replica set on one of the nodes:

[root@node242 mongo]# mongo

MongoDB shell version v4.0.18

connecting to: mongodb://127.0.0.1:27017/?gssapiServiceName=mongodb

Implicit session: session { "id" : UUID("96eb8591-b202-404c-9719-094d53efc8db") }

MongoDB server version: 4.0.18

> config={_id: "rs0", members:[{_id:0,host:"172.16.1.242:27017"},{_id:1,host:"172.16.1.243:27017"},{_id:2,host:"172.16.1.244:27017"}]}

{
	"_id" : "rs0",
	"members" : [
		{
			"_id" : 0,
			"host" : "172.16.1.242:27017"
		},
		{
			"_id" : 1,
			"host" : "172.16.1.243:27017"
		},
		{
			"_id" : 2,
			"host" : "172.16.1.244:27017"
		}
	]
}

> rs.initiate(config)

{
	"ok" : 1,
	"operationTime" : Timestamp(1588068001, 1),
	"$clusterTime" : {
		"clusterTime" : Timestamp(1588068001, 1),
		"signature" : {
			"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),
			"keyId" : NumberLong(0)
		}
	}
}

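rs0:SECONDARY> rs.status();

Right after rs.initiate() the prompt can still show SECONDARY; once the election completes, one member becomes PRIMARY (its prompt changes to rs0:PRIMARY), and rs.status() shows the state of every member.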
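Typing the member list by hand is error-prone once there are several replica sets. The hypothetical helper below (not part of the mongo shell) builds the same document that was passed to rs.initiate() above from a set name and a list of "host:port" strings; extra top-level fields such as `configsvr: true` can be merged in through `options`. It is plain JavaScript and should also work if pasted into the mongo shell.

```javascript
// Hypothetical helper: builds an rs.initiate() document, giving each member a
// sequential _id in the order the hosts are listed.
function buildReplSetConfig(setName, hosts, options = {}) {
  return Object.assign(
    {
      _id: setName,
      members: hosts.map((host, i) => ({ _id: i, host: host })),
    },
    options // e.g. { configsvr: true } for the config server replica set
  );
}

const shardCfg = buildReplSetConfig("rs0", [
  "172.16.1.242:27017",
  "172.16.1.243:27017",
  "172.16.1.244:27017",
]);
// In the mongo shell on one shard node you would then run: rs.initiate(shardCfg)
console.log(JSON.stringify(shardCfg));
```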

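Install the config (metadata) servers, set up as a three-member replica set:

The config servers must be running before mongos is started, because mongos reads its configuration from them. A config server is started the same way as an ordinary mongod.

First instance (port 27017):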

[root@node241 ~]# echo "rs.slaveOk()" >> /root/.mongorc.js

[root@node241 ~]# cat /etc/mongod.conf

# mongod.conf

# for documentation of all options, see:
#   http://docs.mongodb.org/manual/reference/configuration-options/

# where to write logging data.
systemLog:
  destination: file
  logAppend: true
  path: /var/log/mongodb/mongod.log

# Where and how to store data.
storage:
  dbPath: /var/lib/mongo
  journal:
    enabled: true
#  engine:
#  mmapv1:
#  wiredTiger:

# how the process runs
processManagement:
  fork: true  # fork and run in background
  pidFilePath: /var/run/mongodb/mongod.pid  # location of pidfile
  timeZoneInfo: /usr/share/zoneinfo

# network interfaces
net:
  port: 27017
  bindIp: 0.0.0.0  # Enter 0.0.0.0,:: to bind to all IPv4 and IPv6 addresses or, alternatively, use the net.bindIpAll setting.

#security:

#operationProfiling:

replication:
  replSetName: configrs0  # the config server replica set must not use the same name as any shard replica set

sharding:
  clusterRole: configsvr

## Enterprise-Only Options

#auditLog:

#snmp:

Second instance (port 27018):

[root@node241 ~]# cat /etc/mongod_27018.conf

# mongod.conf

# for documentation of all options, see:
#   http://docs.mongodb.org/manual/reference/configuration-options/

# where to write logging data.
systemLog:
  destination: file
  logAppend: true
  path: /var/log/mongodb/mongod_27018.log

# Where and how to store data.
storage:
  dbPath: /var/lib/mongo_27018
  journal:
    enabled: true
#  engine:
#  mmapv1:
#  wiredTiger:

# how the process runs
processManagement:
  fork: true  # fork and run in background
  pidFilePath: /var/run/mongodb/mongod27018.pid  # location of pidfile
  timeZoneInfo: /usr/share/zoneinfo

# network interfaces
net:
  port: 27018
  bindIp: 0.0.0.0  # Enter 0.0.0.0,:: to bind to all IPv4 and IPv6 addresses or, alternatively, use the net.bindIpAll setting.

#security:

#operationProfiling:

replication:
  replSetName: configrs0

sharding:
  clusterRole: configsvr

## Enterprise-Only Options

#auditLog:

#snmp:

Third instance (port 27019):

[root@node241 ~]# cat /etc/mongod_27019.conf

# mongod.conf

# for documentation of all options, see:
#   http://docs.mongodb.org/manual/reference/configuration-options/

# where to write logging data.
systemLog:
  destination: file
  logAppend: true
  path: /var/log/mongodb/mongod_27019.log

# Where and how to store data.
storage:
  dbPath: /var/lib/mongo_27019
  journal:
    enabled: true
#  engine:
#  mmapv1:
#  wiredTiger:

# how the process runs
processManagement:
  fork: true  # fork and run in background
  pidFilePath: /var/run/mongodb/mongod_27019.pid  # location of pidfile
  timeZoneInfo: /usr/share/zoneinfo

# network interfaces
net:
  port: 27019
  bindIp: 0.0.0.0  # Enter 0.0.0.0,:: to bind to all IPv4 and IPv6 addresses or, alternatively, use the net.bindIpAll setting.

#security:

#operationProfiling:

replication:
  replSetName: configrs0

sharding:
  clusterRole: configsvr

## Enterprise-Only Options

#auditLog:

#snmp:

Start the service:

[root@node241 ~]# systemctl enable mongod

[root@node241 ~]# systemctl start mongod

Restart it and make sure it listens on all interface addresses:

[root@node241 ~]# systemctl restart mongod

[root@node241 ~]# lsof -i:27017

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

mongod 5867 mongod 11u IPv4 34346 0t0 TCP *:27017 (LISTEN)

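Create the data directories for the other two instances, then start them:

mkdir -p /var/lib/mongo_27018 /var/lib/mongo_27019

/usr/bin/mongod -f /etc/mongod_27018.conf

/usr/bin/mongod -f /etc/mongod_27019.conf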

[root@node241 ~]# netstat -lntup | grep mongod

tcp 0 0 0.0.0.0:27017 0.0.0.0:* LISTEN 5581/mongod

tcp 0 0 0.0.0.0:27018 0.0.0.0:* LISTEN 5873/mongod

tcp 0 0 0.0.0.0:27019 0.0.0.0:* LISTEN 5912/mongod

Start them automatically at boot:

chmod 755 /etc/rc.local

echo "/usr/bin/mongod -f /etc/mongod_27018.conf" >>/etc/rc.local

echo "/usr/bin/mongod -f /etc/mongod_27019.conf">> /etc/rc.local

systemctl start rc-local

On a config server (for example, connect with mongo to 172.16.1.241:27017), initiate the three-member replica set:

> use admin

switched to db admin

> rs.initiate({_id:"configrs0",configsvr: true,members:[{_id:0,host:"172.16.1.241:27017"},{_id:1,host:"172.16.1.241:27018"},{_id:2,host:"172.16.1.241:27019"}]})

{
	"ok" : 1,
	"operationTime" : Timestamp(1588121567, 1),
	"$gleStats" : {
		"lastOpTime" : Timestamp(1588121567, 1),
		"electionId" : ObjectId("000000000000000000000000")
	},
	"lastCommittedOpTime" : Timestamp(0, 0),
	"$clusterTime" : {
		"clusterTime" : Timestamp(1588121567, 1),
		"signature" : {
			"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),
			"keyId" : NumberLong(0)
		}
	}
}
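Before running rs.initiate() on the config servers, a few properties of the document are worth checking, including the rule noted in the config file that the config server set name must differ from every shard set name. The `checkConfigServerConfig` function below is a hypothetical pre-flight check, not a MongoDB tool, and the "at least 3 members" and "members on separate machines" checks are production recommendations rather than hard server rules. Applied to this article's layout, it flags that all three members run on node241, which is fine for a test cluster but means one host failure takes down every config server.

```javascript
// Hypothetical pre-flight check for the rs.initiate() document of the config servers.
function checkConfigServerConfig(cfg, shardSetNames) {
  const problems = [];
  if (cfg.configsvr !== true) {
    problems.push("configsvr: true is missing");
  }
  if (shardSetNames.includes(cfg._id)) {
    problems.push(`set name "${cfg._id}" is already used by a shard`);
  }
  if (cfg.members.length < 3) {
    problems.push("fewer than 3 members");
  }
  // Compare machines (the part before ":") to spot members sharing one host.
  const machines = new Set(cfg.members.map((m) => m.host.split(":")[0]));
  if (machines.size < cfg.members.length) {
    problems.push("several members share one machine");
  }
  return problems;
}

const configCfg = {
  _id: "configrs0",
  configsvr: true,
  members: [
    { _id: 0, host: "172.16.1.241:27017" },
    { _id: 1, host: "172.16.1.241:27018" },
    { _id: 2, host: "172.16.1.241:27019" },
  ],
};
console.log(checkConfigServerConfig(configCfg, ["rs0"]));
// [ 'several members share one machine' ]
```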

Configure the mongos router:

[root@node240 ~]# cat /etc/mongos.conf

# mongod.conf

# for documentation of all options, see:
#   http://docs.mongodb.org/manual/reference/configuration-options/

# where to write logging data.
systemLog:
  destination: file
  logAppend: true
  path: /var/log/mongodb/mongod.log

# Where and how to store data.
#  engine:
#  mmapv1:
#  wiredTiger:

# how the process runs
processManagement:
  fork: true  # fork and run in background
  pidFilePath: /var/run/mongodb/mongod.pid  # location of pidfile
  timeZoneInfo: /usr/share/zoneinfo

# network interfaces
net:
  port: 27017
  bindIp: 0.0.0.0  # Enter 0.0.0.0,:: to bind to all IPv4 and IPv6 addresses or, alternatively, use the net.bindIpAll setting.

#security:

#operationProfiling:

#replication:

sharding:
  configDB: configrs0/172.16.1.241:27017,172.16.1.241:27018,172.16.1.241:27019  # configrs0 is the name of the config server replica set

## Enterprise-Only Options

#auditLog:

#snmp:
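Both `sharding.configDB` above and `sh.addShard()` later take a seed list of the form `<replica set name>/<host:port>,<host:port>,...`. The helpers below are hypothetical (not part of MongoDB); they build and parse such strings so that a missing set name or an empty host list is caught before it ends up in a config file.

```javascript
// Hypothetical helpers for "<set>/<host:port>,..." seed-list strings.
function formatSeedList(setName, hosts) {
  if (!setName || hosts.length === 0) throw new Error("need a set name and at least one host");
  return `${setName}/${hosts.join(",")}`;
}

function parseSeedList(seed) {
  const slash = seed.indexOf("/");
  if (slash <= 0) throw new Error(`missing replica set name in "${seed}"`);
  const hosts = seed.slice(slash + 1).split(",").filter((h) => h.length > 0);
  if (hosts.length === 0) throw new Error(`no hosts in "${seed}"`);
  return { setName: seed.slice(0, slash), hosts };
}

const configDB = formatSeedList("configrs0", [
  "172.16.1.241:27017",
  "172.16.1.241:27018",
  "172.16.1.241:27019",
]);
console.log(configDB);
// configrs0/172.16.1.241:27017,172.16.1.241:27018,172.16.1.241:27019
console.log(parseSeedList("rs0/172.16.1.242:27017,172.16.1.243:27017,172.16.1.244:27017").hosts.length); // 3
```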

Start the service:

[root@node240 ~]# /usr/bin/mongos -f /etc/mongos.conf

2020-04-28T18:24:16.158+0800 W SHARDING [main] Running a sharded cluster with fewer than 3 config servers should only be done for testing purposes and is not recommended for production.

about to fork child process, waiting until server is ready for connections.

forked process: 8413

[root@node240 ~]# lsof -i:27017

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

mongos 5813 root 10u IPv4 36513 0t0 TCP *:27017 (LISTEN)

mongos 5813 root 14u IPv4 35195 0t0 TCP node240:58632->172.16.1.241:27017 (ESTABLISHED)

mongos 5813 root 20u IPv4 36515 0t0 TCP node240:58638->172.16.1.241:27017 (ESTABLISHED)

Add the shard replica set to the cluster through mongos:

[root@node240 ~]# mongo

MongoDB shell version v4.0.18

connecting to: mongodb://127.0.0.1:27017/?gssapiServiceName=mongodb

mongos> use admin

switched to db admin

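mongos> sh.addShard("rs0/172.16.1.242:27017,172.16.1.243:27017,172.16.1.244:27017");  // rs0 is the shard replica set name. This adds a single shard, so all data lives on it and every member holds identical data. mongos automatically targets the current PRIMARY and, after a failover, routes to the newly elected PRIMARY without manual intervention.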

{
	"shardAdded" : "rs0",
	"ok" : 1,
	"operationTime" : Timestamp(1588122970, 3),
	"$clusterTime" : {
		"clusterTime" : Timestamp(1588122970, 3),
		"signature" : {
			"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),
			"keyId" : NumberLong(0)
		}
	}
}

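This completes the server setup. Next, configure the sharding strategy for individual collections:

Hashed sharding:

First, enable sharding for the database on mongos:

sh.enableSharding("test")  // allow the test database to be sharded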

mongos> sh.enableSharding("test")

{
	"ok" : 1,
	"operationTime" : Timestamp(1588123190, 3),
	"$clusterTime" : {
		"clusterTime" : Timestamp(1588123190, 3),
		"signature" : {
			"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),
			"keyId" : NumberLong(0)
		}
	}
}

sh.shardCollection("test.t",{id:"hashed"})  // hashed sharding of test.t with id as the shard key

mongos> sh.shardCollection("test.t",{id:"hashed"})

{
	"collectionsharded" : "test.t",
	"collectionUUID" : UUID("4f7a9aaf-207c-4b19-8216-b9a220334af3"),
	"ok" : 1,
	"operationTime" : Timestamp(1588125137, 10),
	"$clusterTime" : {
		"clusterTime" : Timestamp(1588125137, 10),
		"signature" : {
			"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),
			"keyId" : NumberLong(0)
		}
	}
}

db.t.getIndexes() shows that a hashed index on id (id_hashed) was created automatically:

mongos> db.t.getIndexes()

[
	{
		"v" : 2,
		"key" : {
			"_id" : 1
		},
		"name" : "_id_",
		"ns" : "test.t"
	},
	{
		"v" : 2,
		"key" : {
			"id" : "hashed"
		},
		"name" : "id_hashed",
		"ns" : "test.t"
	}
]
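To see why hashing spreads sequential ids such as 1..1000 over chunks, here is an illustration in plain JavaScript. It does not use MongoDB's hash function: MongoDB computes its own 64-bit hash of the field value, whereas this sketch substitutes a 32-bit FNV-1a hash of the decimal string. Dividing the hash space into three equal ranges is likewise a hypothetical stand-in for chunks spread over three shards (this article's cluster has only one shard).

```javascript
// 32-bit FNV-1a hash (a stand-in for MongoDB's own hashed-index function).
function fnv1a32(str) {
  let h = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < str.length; i++) {
    h ^= str.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0; // multiply by the FNV prime, keep 32 bits
  }
  return h >>> 0;
}

// Split the 32-bit hash space into numChunks equal ranges and count how many
// ids land in each range.
function hashedChunkCounts(ids, numChunks) {
  const counts = new Array(numChunks).fill(0);
  const span = 2 ** 32 / numChunks;
  for (const id of ids) {
    counts[Math.floor(fnv1a32(String(id)) / span)] += 1;
  }
  return counts;
}

const ids = Array.from({ length: 1000 }, (_, i) => i + 1);
console.log(hashedChunkCounts(ids, 3)); // three counts that add up to 1000
```

Consecutive ids hash to unrelated positions, so inserts with an increasing id are spread over all ranges instead of piling up in one.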

On the mongos server:

Sharding was configured for the t collection of the test database above, so insert 1000 documents into t as a test:

mongo --port=27017  # 27017 is the mongos port

use test

mongos> for(var i=1;i<=1000;i++) {db.t.insert({id:i,name:"Leo"})};

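Running db.t.find().count() on each member of rs0 returns 1000 every time. Because only one shard (rs0) has been added, all 1000 documents live on that shard and each of its three replica set members holds a full copy: the data is replicated, not split three ways. Counting on the secondaries works because of the rs.slaveOk() line added to /root/.mongorc.js. Spreading documents across machines would require adding more shards (each its own replica set) with sh.addShard().

Check: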

rs0:PRIMARY> use test

switched to db test

rs0:PRIMARY> db.t.find().count();  // on 172.16.1.243

1000

rs0:SECONDARY> db.t.find().count()  // on 172.16.1.242

1000

rs0:SECONDARY> db.t.find().count()  // on 172.16.1.244

1000

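Use db.t.stats() to see how the collection is sharded, db.t.getShardDistribution() for per-shard document counts, and sh.status() for the sharding status of all databases and collections in the cluster.

Ranged sharding: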

mongos> use test

switched to db test

mongos> sh.shardCollection("test.tt",{id:1})  // ranged sharding of test.tt with id as the shard key

{
	"collectionsharded" : "test.tt",
	"collectionUUID" : UUID("f9e11c58-b34b-4cd2-a2a3-23db5e8fcc04"),
	"ok" : 1,
	"operationTime" : Timestamp(1588127190, 12),
	"$clusterTime" : {
		"clusterTime" : Timestamp(1588127190, 12),
		"signature" : {
			"hash" : BinData(0,"AAAAAAAAAAAAAAAAAAAAAAAAAAA="),
			"keyId" : NumberLong(0)
		}
	}
}

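For ranged sharding, just specify the shard key as {id:1}. Chunks are managed automatically by the cluster: they are split as they grow and the balancer migrates them between shards. For example, insert 1000 documents into the ranged collection: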

mongos> for(var i=1;i<=1000;i++) {db.tt.insert({id:i,name:"Leo"})};

WriteResult({ "nInserted" : 1 })

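Again, db.tt.find().count() returns 1000 on each member of rs0: with a single shard, every document sits on rs0 and is replicated to all three members rather than distributed across three shards.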
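By contrast with hashed sharding, ranged chunks are contiguous intervals of the shard key. The sketch below looks up the chunk for a key with a binary search over split points; the split points 250/500/750 are hypothetical (a real cluster's chunk boundaries appear in sh.status()). It also illustrates a known drawback of ranged sharding on a monotonically increasing key such as this article's id: every new insert targets the last chunk, so one shard takes the whole write load until that chunk is split and migrated.

```javascript
// Chunk i covers [splits[i-1], splits[i]); the first and last chunks are open-ended.
// Returns the chunk index for `key` via binary search over the sorted split points.
function chunkIndexFor(key, splits) {
  let lo = 0;
  let hi = splits.length; // there are splits.length + 1 chunks
  while (lo < hi) {
    const mid = (lo + hi) >> 1;
    if (key < splits[mid]) hi = mid;
    else lo = mid + 1;
  }
  return lo;
}

// Hypothetical chunk boundaries after some splits on {id: 1}.
const splits = [250, 500, 750];

// With an increasing id, every new insert lands in the last chunk.
const newIds = [1001, 1002, 1003];
console.log(newIds.map((id) => chunkIndexFor(id, splits))); // [ 3, 3, 3 ]
```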

MongoDB v4.0.18 Sharding High-Availability Cluster Setup (CentOS 7.2), Unique on the Web
